With Elon Musk's purchase of Twitter, we've all been enjoying the wailing and gnashing of teeth from the Blue Cheka as they stare in pearl-clutching horror at the grim prospect of losing absolute control over their ability to shape narratives and control the (perception of the) social consensus by silencing dissenting voices. Their salty tears have crystallized into big salty mountains that we've been mining on an industrial scale and it is oh, so very, very salty.

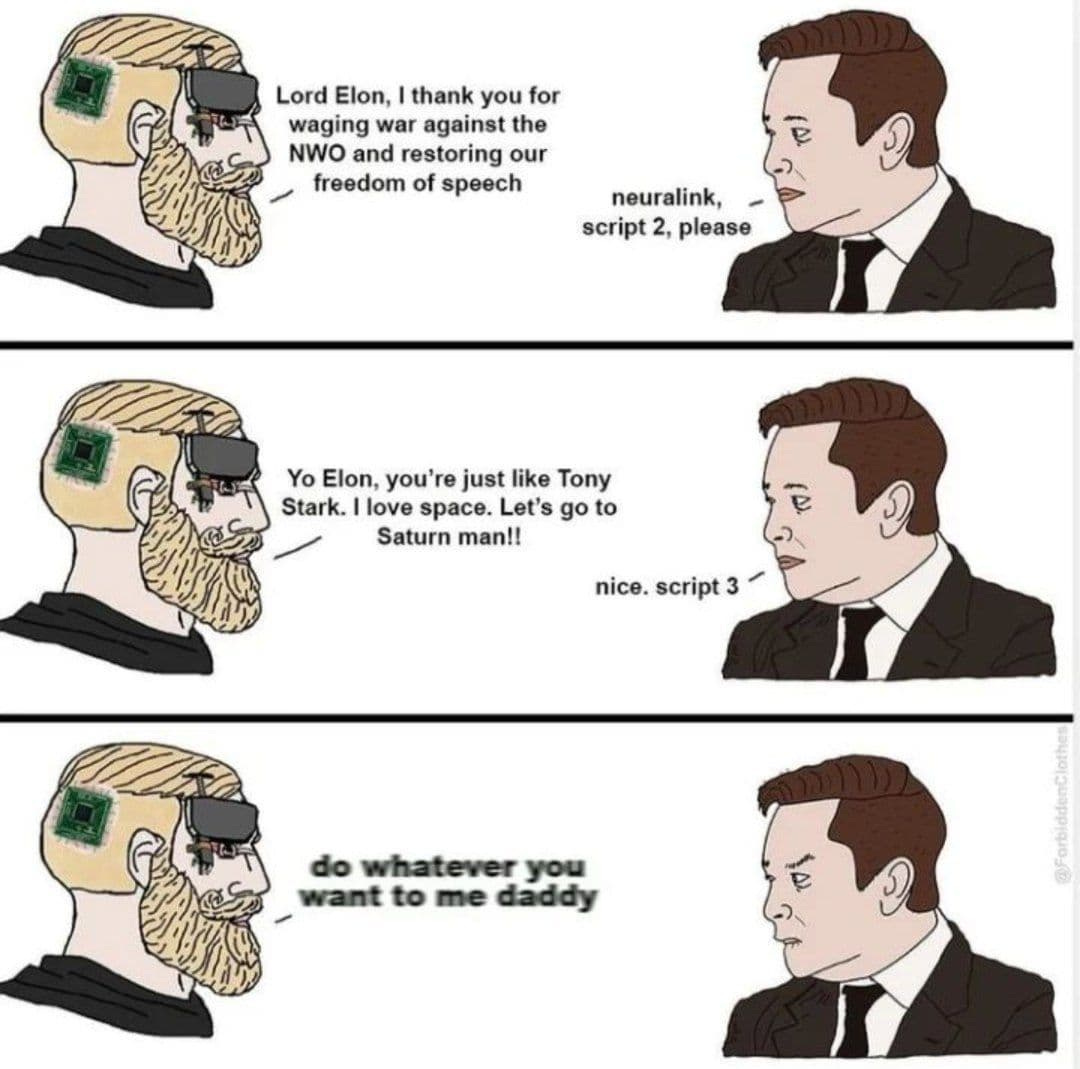

There are voices of caution. Those who think Musk is acting in good faith point to the fact that he's about to go to war with the entire globohomo establishment, meaning he'll be facing not only internal resistance from Twitter's neon-haired pronoun people, but will very likely be exposed to financial pressure from WokeCap along with legal and regulatory pressure from the Ringwraiths of Washington. It's reasonable to wonder if he'll be able to win this fight ... which is no reason not to support the fight itself. You lose every war you pre-emptively surrender in.

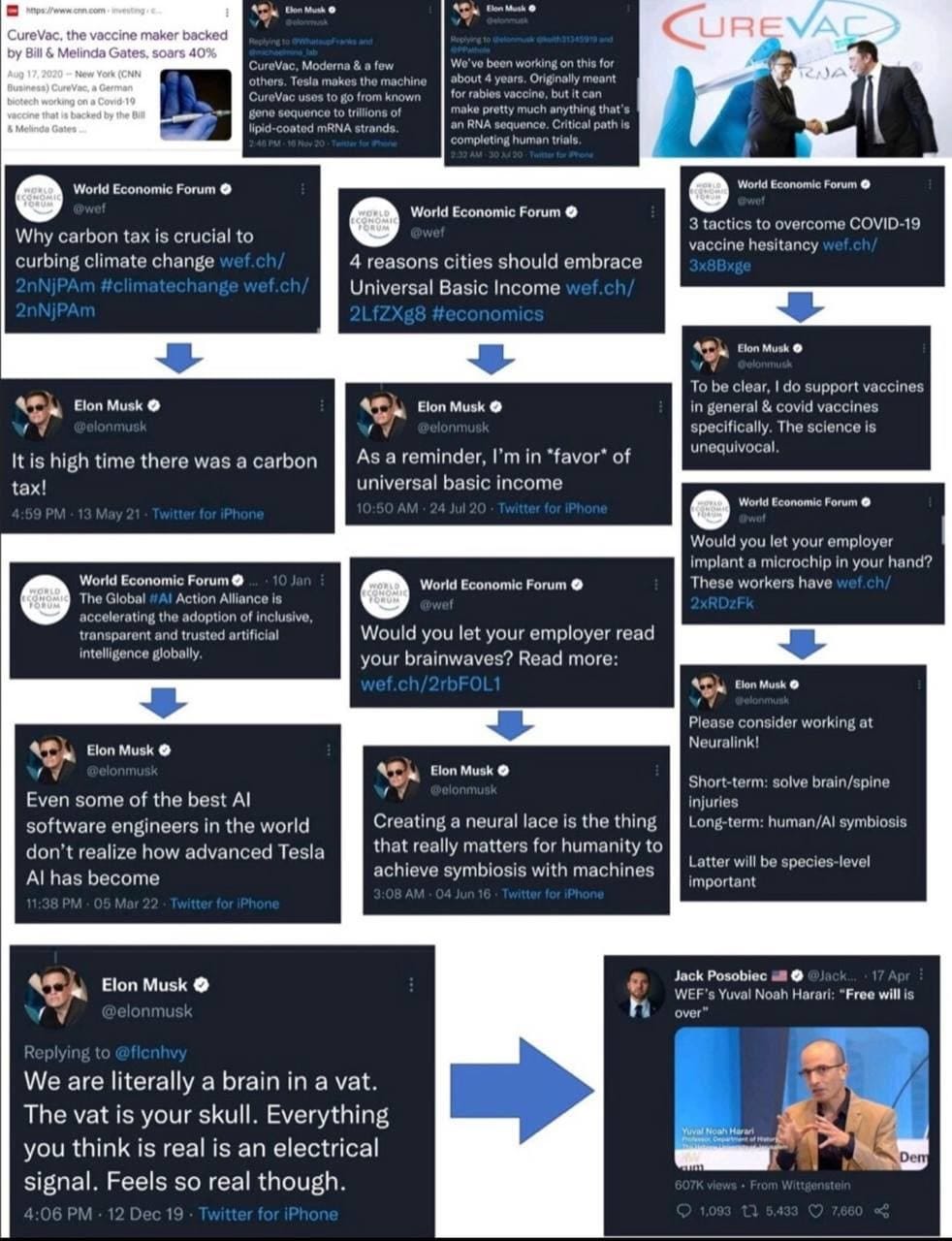

Others point to the fact that Musk is not exactly a right-wing culture warrior. One graphic that's been making the rounds sums this position up nicely:

Essentially, this narrative goes, Elon Musk has made numerous statements that align perfectly with the stated goals of Klaus Schwab's criminal World Economic Forum cult. While Musk presents himself as a visionary techno-libertarian industrialist with his gaze set firmly on the future of humanity, a figure right out of an Ayn Rand novel, a close analysis of what he is actually building reveals a darker figure. Tesla's self-driving electric vehicles portend a world in which Google can limit your mobility at the flick of a switch. StarLink will provide high-speed wireless Internet to the entire planet, a project that intersects in disturbing ways with NeuraLink, which will provide high-speed wireless access to the human brain. In this corrupt and fallen world, no one is allowed to become a billionaire who hasn't taken the ticket, kissed the ring, and pledged his soul to the devil. Musk is just as bad as the rest of them, and in fact worse - he's leading you into a trap. Just look at how he wants to de-anonymize Twitter: that's the first step to a digital identity!

On the other hand, Musk was pretty outspoken about the stupidity of the Coronavirus lockdowns, going so far as to uproot Tesla from its native California in order to relocate to the relatively sane free state of Texas. Regardless of Musk's position on the efficacy of mRNA technology for vaccination, that's not the kind of business move that aligns in any sort of obvious way with the WEF's Great Narrative. One could also point to Musk's support for Bitcoin: moving the world to a digitized financial system is indeed a WEF goal, but there's a chasm of difference between the Central Bank Digital Currency system they're rolling out and the decentralized blockchain of the established cryptocurrencies. The former are company scrip for an open-air technological prison; the latter, by contrast, make direct economic control of the plebs much more difficult for centralized authorities.

So which is it? Hell if I know. I'm not inside Musk's head, and I don't know what motivates him. From where I sit, there are reasonable grounds for both positions - that Musk is the loose cannon autistic visionary he presents as, or that he's just another fellow traveller with the WEF, playing the role of Pied Piper as a foil to the supervillain personae of Bezos, Zuckerberg, Soros, Gates, and Schwab.

But that's not really what I wanted to talk about.

A big component of the 'Musk can't be trusted' narrative comes down to the technology he's developing, and the disturbing implications of that technology. A fully operational StarLink satellite constellation will mean that there is essentially no part of the Earth's surface that will be out of contact with the Internet - which is great if you want WiFi in the Andean highlands, but maybe not so great if the entire reason you wanted to go to the Andean highlands was to escape the reach of the Net.

StarLink brings to mind the old Bruce Sterling cyberpunk novel Islands in the Net, which is basically about how the last few nationalistic hold-outs get absorbed into a networked global corporate meta-culture from which no escape is possible. That's a terrifying vision if you don't trust the people that will be running that system, because if it succeeds in absorbing the entire world then not only is it impossible to escape it, it's hard to see how it can ever be defeated. An empire can crumble into decadent senility for an extremely long time if there are no external barbarians to topple and loot it.

Then there's NeuraLink, which promises to establish relatively non-invasive, high-bandwidth connectivity directly between the human brain and external computers. That's such an obvious cyberpunk trope that it's almost a trope to point it out.

The terrifying possibility with a technology like NeuraLink is that your psyche is now open to being hacked, not just using propaganda, social engineering, brainwashing, and the like, but at a direct neurological level. Someone can press a button and make you feel a feeling. They can introduce sensory hallucinations. Dreams. Thoughts at the subconscious level. In effect, one would become nothing more than an appendage of the AI, a drone of the Internet of Bodies. And with StarLink always overhead, that neurological control would be pervasive.

So is Musk really trying to prototype the Borg? Again - I don't really know. It's certainly possible. And I have no doubt whatsoever that there are powers and principalities active in this world for whom this level of neurospiritual tyranny is a wet dream.

Just because that's a possible application of the technology doesn't mean that it's the only possible application, however. All technology is neutral at the most basic level, a tool that may have positive or negative effects depending on whether it's used responsibly or irresponsibly, or with positive or malign intent.

A pistol in the hands of a woman facing down a rapist means she doesn't get raped that day. A pistol in the hands of a mugger means you lose your wallet and maybe your life. A pistol in the hands of a five-year-old means a tragic accidental death. A pistol in the hands of a competition shooter means a sweet grouping at 20 yards and a good time had by all.

Then there are the unintended consequences of technology. Automobiles have led to unprecedented mobility - journeys of weeks can now be done in hours. Virtually no one wants to give that up. On the other hand, the mass production of the personal internal combustion engine has led to air pollution, hours spent stuck in traffic jams, carnage on the highways, and an epidemic of morbid obesity because lazy humans don't want to use their legs if they don't have to. The obesity connection points to one of the real dangers with any technology, which is that it can cause the atrophy of natural capabilities we developed the technology to augment.

When it comes to something like global internet or direct brain-computer interfaces, these are the really interesting questions, at least to me. It's so obvious that they can be used to do really evil things, like set up an inescapable techno-totalitarianism, that it's almost boring to talk about. But what does positive, responsible use of this technology look like? And what are the unintended consequences?

One could imagine superhuman learning capabilities. We've gotten so used to having the collected knowledge of humanity at our fingertips in the form of smartphones that we barely think about it - looking something up when no one in the group knows the answer has become reflexive. Similarly, getting lost is more or less a thing of the past: you can be in a new city where you don't speak the language and can't read the signs, and it doesn't matter because the GPS on your phone tells you exactly where you need to go. There's also the explosive growth of how-to YouTube videos, which people use to train themselves in new skills; I've gotten a lot of mileage out of powerlifting guides, which helped me to sidestep a lot of the pitfalls of bro science without the expense of professional coaching.

Of course, these capabilities come with their costs, too: people's memories aren't what they were, and they've largely lost the ability to read maps or navigate by dead reckoning. There's a reason that parents in Silicon Valley are known to prefer that their children's schools prohibit the use of information technology: they want their brains to develop first, so that they can use computers as an extension of their intellects rather than as a replacement for them.

A brain-computer interface might be able to extend that frictionless access to knowledge to physical skills. Imagine being able to download a black belt in Karate, Matrix style, or even something much simpler - say, how to change a tire or repair the air conditioner. I doubt it would be quite as fast as the nearly instantaneous process shown in the Matrix, but it also seems like having a direct connection between the Internet and your nervous system would smooth the process of skill acquisition to almost ridiculous levels. You might not pick up a difficult skill with literal instantaneity, but it could well be the case that difficult skills could be acquired in days or hours instead of months or years, because you'd have the digital equivalent of a master of the skill guiding your every move as you practiced, doing the movements perfectly right from the beginning.

A brain-computer interface would enable more or less telepathic levels of communication. Rather than explaining to someone how you feel, you could simply transmit the emotion, directly; rather than telling them what you see, or snapping a picture and texting it, you could provide the raw feed from your visual cortex[1] ... together with the sounds, smells, and texture, as appropriate. Communication would become a rich exchange of direct sensory and emotional data.

Of course, even in the absence of scheming totalitarians, it's easy to see how one's individuality might be lost in this raging storm of information. One's own tiny psyche might become a droplet of water dissolving in the vast ocean of networked humanity; the Borg emerging as a self-organizing, natural outcome of the technology, rather than being imposed from the top down. The thing is, though, that's a pretty obvious potential failure mode - so obvious that users of the technology will certainly be aware of it, and take steps to prevent it.

Even without going as far as the entirety of the species getting subsumed in the Borg, one could easily imagine severe psychological issues due to depersonalization and dissociation arising from excessive or irresponsible use. When you’re trading thoughts and memories back and forth directly on a regular basis, you might find yourself in a situation where you’re no longer really sure which thoughts and memories are yours. Paging Philip K. Dick.

I'd expect anyone who took the plunge into getting implanted with such a device to be deeply paranoid about security risks. You'd want to make sure that all communications were heavily encrypted, and that all the software was open source, so millions of eyeballs could check for bugs and exploits and verify that the manufacturer or the ISP or the government or a rogue criminal organization didn't have a back door via which they could shove whatever they wanted into your neurons (or extract whatever they wanted, or erase whatever they wanted....) without you even realizing. Trust would be a huge issue and no one is going to just take anyone's word for it; the Twitter Trust and Safety Council model isn't going to cut it.

And that gets to the other issue: adoption. It's pretty unlikely that this technology will be forced on people, and given that it requires actual surgery that touches a pretty intimate part of one's physiology, people will probably be very cautious about adopting it. We've had implantable RFID chips for tracking and security for like a decade now, and almost no one has one - partly because very few people think it's that much of an advantage over just using your card, and partly because a lot of people are creeped out by it. "Get this chip so we can track you everywhere" isn't the best marketing ploy.

NeuraLink will face similar issues to an exponential degree. Even after the technology goes live for human use, it will probably be quite a while before it's widely adopted ... if it ever is. Especially considering it's unlikely to be inexpensive: while the chip itself might be cheap once they're being mass produced (although that assumes they don't need to be customized, which they probably will be), surgical implantation will certainly not be affordable. That also means that for quite some time it will be available only to the wealthy. And you know who has real trust issues when it comes to personal privacy? Rich people.

When the future becomes the present it's almost never as terrifying as we feared, nor is it as cool as we hoped. It's almost invariably extremely banal. We were promised a space age and we got the ISS. We were promised a cyberpunk dystopia with bionic-armed street samurai and hot hacker chicks with neon hair; instead we got Facebook and obese bluehairs whining about misogynoir. We were looking forward to genetically engineered superhumans and we got RoundupReady corn. The technology gets developed, sure, but it turns out to be a lot more limited than we'd expected due to all those awkward engineering issues we didn't think of when it was still science fiction, because the real world is always messier and more intractably complicated than the simple models our left brains like to substitute for reality. Evil people try to use new technologies for evil things, as is ever the case; but as is ever the case, there are good people who push back and muck up their schemes, and their plans never quite work out the way the evil ones had hoped. By the same token, the technological miracles and social innovations developed by creative humans with good intentions get twisted and bent towards criminal purposes. Neither utopia nor dystopia is ever allowed to manifest, and that's all to the good, because it keeps the world from getting either boring or hopeless. There's always enough good to keep us in the game, and always enough bad to keep things interesting.

So is Elon Musk one of the good guys, or one of the black hats? Again: no idea. My main point here is that the mere fact that he's developing a technology with potentially frightening uses is not an argument either way. That technology is coming whether anyone likes it or not, and preventing it from being used in the worst possible ways is going to require that we put real thought into how freedom of thought is maintained in an age of networked cortices. But it’s going to take a while yet before it’s even slightly operable, and when it comes online, my guess is that it will be nowhere near as amazing - or as terrifying - as people hope or worry it will be.

[1] Interestingly, this may be more or less how dolphins communicate. Their "speech", according to one hypothesis, is not symbolic communication as we understand it, but is rather an adaptation of their sonar imaging capability. They simply relay images - or rather, three-dimensional, moving representations - directly via a sort of simulated sonar. If we ever learn to interpret this, it would be absolutely fascinating to know what their cultural memory has preserved.

"Ringwraiths of Washington" The Nazgûl darkness! I like the image a lot.

I think you are on the money about Musk - big borg future ahead - don't trust his motivations at all - but as you say - good guy or black hat? Don't know. Probably doesn't matter as the tech and the control is coming upon us regardless.

Also you touched on something I feel is important as well but of course no one says it out loud for fear of being labeled a religious nut case, and that's "there are powers and principalities active in this world for whom this level of neurospiritual tyranny is a wet dream." Lots to be said in this space.

Came across your Substack via comments on The Good Citizen. Thought I would check it out. Good article on Musk and technocracy. I have followed Patrick Wood on technocracy for several years. Will be linking your article today @https://nothingnewunderthesun2016.com/

Besides anyone that goes by the handle of John Carter and postcards from Barsoom can't be all bad. Always liked the John Carter and Tarzan stories when I was younger. The Frazetta art is perfect as well!!!!